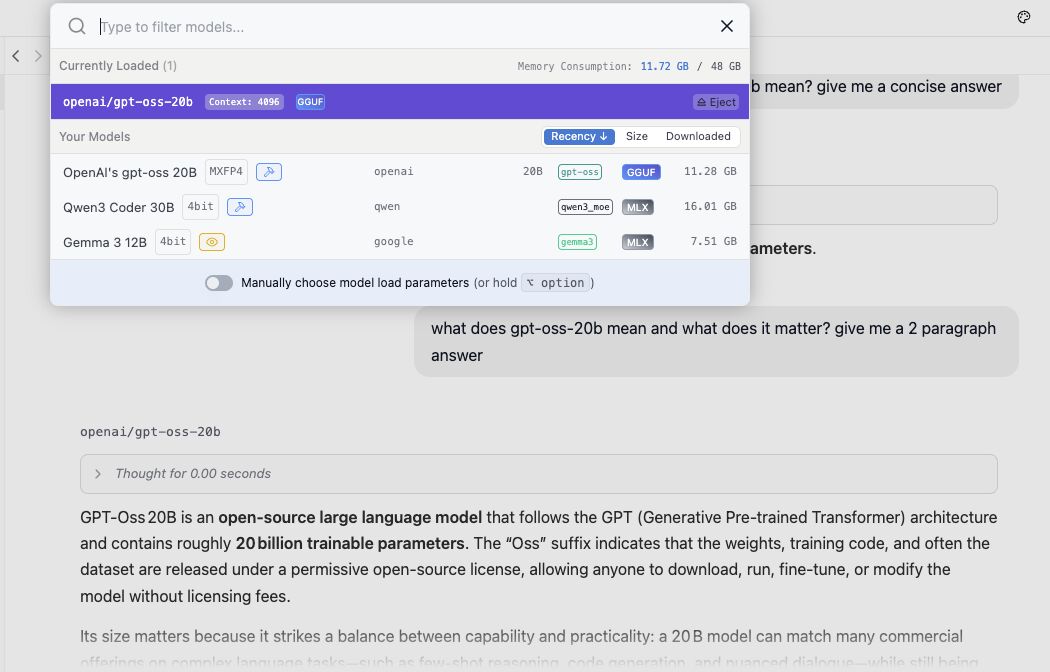

Figure 1: Local LLM configuration and setup

I've been experimenting with OpenAI's new open-weight model, gpt-oss-20b, on my Apple M4 Pro using LM Studio — and I'm genuinely impressed. This thing runs fast, respects your privacy (no data leaves your machine), and doesn't cost a cent beyond the initial download.

In my testing, it holds its own against cloud-based giants like ChatGPT 5, especially for reasoning tasks, code generation, and even creative writing, all without an internet connection. In a world where AI often means "connect to someone else's infrastructure," this flips the script. You can build and test locally, with no API calls or billing meters in sight.

There are tradeoffs: no live web data, and you need reasonably capable hardware. But the benefits of local-first AI are growing fast. It feels like a glimpse into the future of decentralized intelligence.

💬 Are you running any open-weight models locally? I'd love to hear what's working for you and what you're building.